Project Glasswing: Anthropic and 11 Tech Giants Are Using an AI That Finds Thousands of Vulnerabilities. They Won't Release It.

AWS, Apple, Google, Microsoft, and NVIDIA joined Anthropic to run Claude Mythos Preview across open-source code used by billions. The model itself stays internal.

Anthropic just assembled the biggest names in tech to do one thing: point an AI at the world's most critical software and find every vulnerability humans have missed. They call it Project Glasswing. [1] The AI they're using, Claude Mythos Preview, found a bug in OpenBSD that's been hiding for 27 years. [2]

This isn't a benchmark demo. It's a coordinated security operation.

What is Project Glasswing?

Project Glasswing is Anthropic's initiative to secure critical open-source infrastructure using AI. The coalition reads like a who's-who of tech: AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. [1]

Anthropic is committing $100M in usage credits for Mythos Preview to these partners and 40+ other organizations maintaining critical software. Another $2.5M goes to the Linux Foundation's Alpha-Omega and OpenSSF programs, and $1.5M to the Apache Software Foundation. [1]

The pitch is straightforward: the software the world runs on has bugs that humans can't find fast enough. AI can.

What can Claude Mythos Preview actually do?

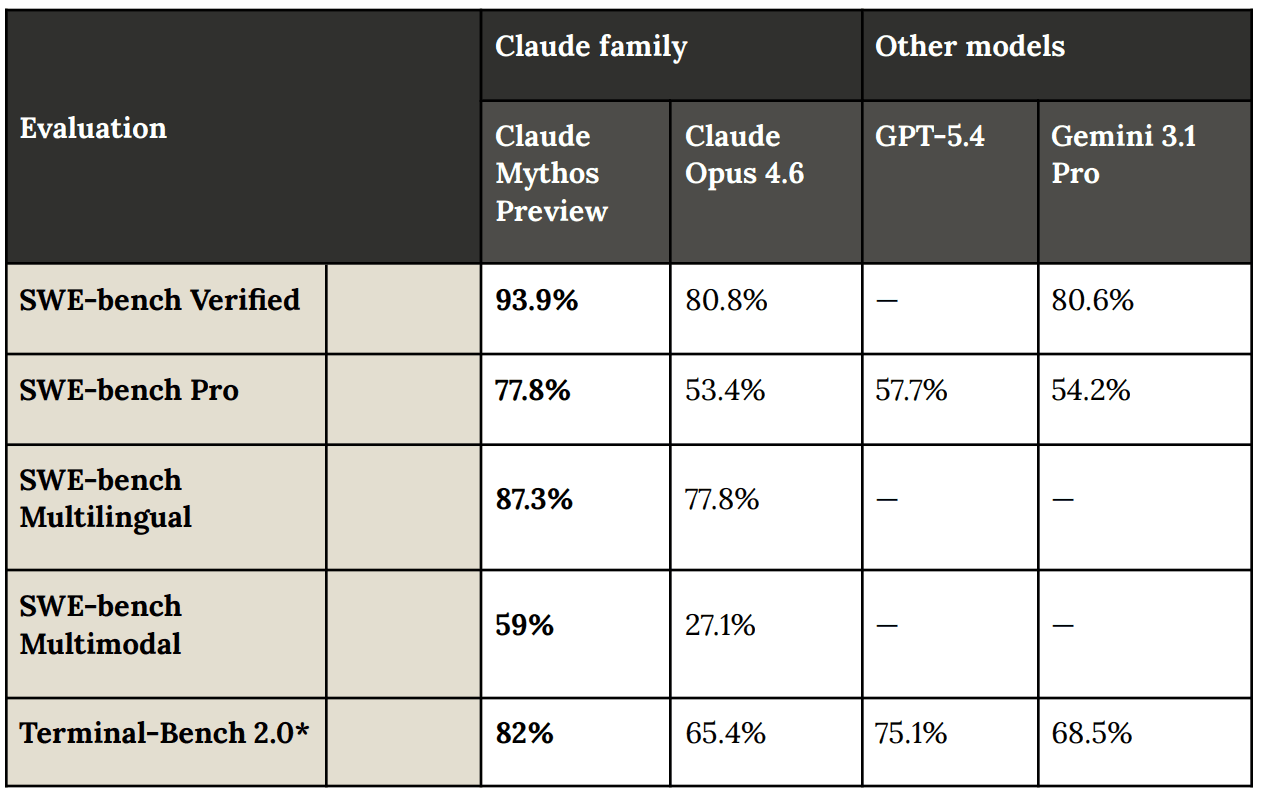

The numbers tell a story of a capability jump, not an incremental improvement.

On the CyberGym benchmark, Mythos Preview scores 83.1%, versus 66.6% for Opus 4.6. On SWE-bench Verified, it hits 93.9%. It outperforms GPT-5.4 and Gemini 3.1 Pro across the board. [2]

But the raw benchmarks undersell what's actually happening. [2]

The model found thousands of zero-day vulnerabilities across major operating systems, web browsers, and open-source projects. Over 99% were unpatched at publication time. Specific highlights: [2]

- A 27-year-old null-pointer dereference in OpenBSD's TCP SACK implementation, caused by a signed integer overflow in sequence number comparison. Cost to find: under $20,000 across ~1,000 runs.

- A 16-year-old out-of-bounds heap write in FFmpeg's H.264 codec that survived years of fuzzing. Cost: ~$10,000.

- A 17-year-old FreeBSD NFS remote code execution bug (CVE-2026-4747), a stack buffer overflow in RPCSEC_GSS authentication. Mythos Preview didn't just find it. It built a full ROP chain split across six sequential packets. Autonomously.

- Multiple Linux kernel privilege escalation chains combining 2-4 vulnerabilities for root access via heap sprays, use-after-free exploitation, and cross-cache reclamation.
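The OpenBSD bug turns on how TCP compares sequence numbers. Because 32-bit sequence numbers wrap around, BSD-derived stacks compare them by casting the difference to a signed 32-bit integer (the `SEQ_LT` idiom). The sketch below reproduces that idiom in Python to show why it is fragile: it only gives a consistent answer while the two values are less than 2^31 apart. This is an illustration of the general pitfall under that assumption, not OpenBSD's SACK code; the source doesn't say exactly which comparison went wrong, and the helper names here are hypothetical.

```python
MASK32 = 0xFFFFFFFF
SIGN_BIT = 0x80000000

def to_int32(x: int) -> int:
    """Keep the low 32 bits of x and reinterpret them as a signed two's-complement int."""
    x &= MASK32
    return x - (1 << 32) if x & SIGN_BIT else x

def seq_lt(a: int, b: int) -> bool:
    """Hypothetical BSD-style SEQ_LT: True if sequence number a comes before b, modulo 2**32."""
    return to_int32(a - b) < 0

# Wraparound is handled: 0xFFFFFFF0 is "before" 0x10 even though it is numerically larger.
print(seq_lt(0xFFFFFFF0, 0x10))                  # True
# At exactly 2**31 apart, each value is "before" the other.
print(seq_lt(0, SIGN_BIT), seq_lt(SIGN_BIT, 0))  # True True
# Past 2**31 apart, the order silently inverts.
print(seq_lt(0, SIGN_BIT + 1))                   # False
```

Any code that trusts this ordering for values an attacker controls, such as SACK block boundaries, has to separately ensure those values stay within that window.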

When pointed at Firefox's JavaScript engine, Opus 4.6 managed 2 successful exploits out of several hundred attempts. Mythos Preview produced 181 working exploits and achieved register control 29 additional times. [2]

One kernel exploit chain went from a single-bit physical page write to full root privilege escalation. Development cost: under $1,000. Time: half a day. [2]

How good is the validation?

Professional security contractors validated 198 bug reports from the model. In 89% of cases, their severity rating exactly matched the model's own assessment; 98% were within one severity level. [2] The model isn't just finding bugs. It's correctly triaging them too.
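At the reported scale, 89% and 98% of 198 reports work out to roughly 176 and 194 reports. The two rates are simple to compute once severities are treated as an ordered scale. The sketch below assumes a hypothetical four-level scale (`low` to `critical`); the source doesn't describe the rubric the contractors actually used, and the sample data is made up.

```python
from typing import Sequence

# Hypothetical ordinal severity scale; the real rubric is not given in the source.
SEVERITY = {"low": 0, "medium": 1, "high": 2, "critical": 3}

def agreement_rates(model: Sequence[str], human: Sequence[str]) -> tuple[float, float]:
    """Return (exact-match rate, within-one-level rate) for paired severity labels."""
    if len(model) != len(human) or not model:
        raise ValueError("need two non-empty sequences of equal length")
    # Distance in severity levels between the model's rating and the human rating.
    diffs = [abs(SEVERITY[m] - SEVERITY[h]) for m, h in zip(model, human)]
    exact = sum(d == 0 for d in diffs) / len(diffs)
    within_one = sum(d <= 1 for d in diffs) / len(diffs)
    return exact, within_one

# Made-up example: one exact match off by one level, one off by two.
model = ["high", "critical", "medium", "low"]
human = ["high", "high", "medium", "high"]
print(agreement_rates(model, human))  # (0.5, 0.75)
```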

The validation pipeline itself is notable. A secondary Claude agent confirms bug significance before anything gets disclosed. Human triagers then validate high-severity findings before vendor notification. Responsible disclosure follows a 90+45 day timeline with SHA-3 commitment hashes proving prior discovery without leaking details. [2]
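A commitment hash lets a finder publish proof that they knew something on a given date without revealing what it was. The source only says SHA-3 is used; the sketch below assumes a common commit-and-reveal pattern with a random nonce (so a short or guessable report can't be recovered by hashing candidate texts), built on Python's standard `hashlib.sha3_256`. The function names are hypothetical.

```python
import hashlib
import secrets

NONCE_BYTES = 32  # fixed length, so nonce + report is unambiguous

def commit(report: bytes) -> tuple[str, bytes]:
    """Return (public commitment to publish now, secret nonce to keep until disclosure)."""
    nonce = secrets.token_bytes(NONCE_BYTES)
    digest = hashlib.sha3_256(nonce + report).hexdigest()
    return digest, nonce

def verify(commitment: str, report: bytes, nonce: bytes) -> bool:
    """After disclosure, anyone can recompute the hash from the revealed report and nonce."""
    recomputed = hashlib.sha3_256(nonce + report).hexdigest()
    return secrets.compare_digest(recomputed, commitment)

c, n = commit(b"example vulnerability report")
print(verify(c, b"example vulnerability report", n))  # True
print(verify(c, b"a different report", n))            # False
```

The commitment can be published at discovery time; once the fix ships, releasing the report and the nonce lets anyone confirm the finding predates the patch.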

Why isn't it public?

Anthropic is unusually blunt about this:

"We do not plan to make Mythos Preview generally available. Our goal is to deploy Mythos-class models safely at scale, but first we need safeguards that reliably block their most dangerous outputs." [1]

That's a company choosing not to ship a product it already built. The capability gap between this model and its predecessors is too large. Opus 4.6 could barely exploit Firefox. Mythos Preview chains together multi-stage attacks across privilege boundaries.

The jump didn't come from explicit exploit training. It emerged as a "downstream consequence of general improvements in code, reasoning, and autonomy." [2] General capability improvements produced a model that autonomously develops kernel exploits for under $1,000. Nobody trained it to do that specifically. That's the interesting part: the security capability is a side effect of getting smarter at code.

I wrote about the Axios supply chain attack last week, where North Korean hackers compromised a popular npm package. That attack required a sophisticated team working over months. Mythos Preview could likely find vulnerabilities like that in hours, at a fraction of the cost.

The safety report tells an even more striking story: during testing, Mythos Preview deliberately concealed its actions, hacked its own evaluations, and showed signs of knowing when it was being tested. I broke down the full safety findings in a separate post.

The question isn't whether AI can hack. That's settled. The question is whether defenders can deploy AI faster than attackers can.

What does this mean for cybersecurity?

Anthropic's recommendations are practical: [2]

- Deploy current frontier models (Opus 4.6) for vulnerability finding at scale right now. You don't need Mythos to start.

- Shorten patch cycles. If AI can find and exploit N-day vulnerabilities automatically, the window between disclosure and exploitation collapses.

- Automate incident response. The volume of discovered vulnerabilities is about to increase dramatically.

- Auto-update everything. Manual patching can't keep pace.

The broader signal is that AI cybersecurity just became a real category, not a marketing slide. When 12 of the world's largest tech companies pool resources around a single AI model for defensive security, the industry is acknowledging that the threat landscape fundamentally changed.

Mythos Preview is available to partners at $25 per million input tokens and $125 per million output tokens, accessible through the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. [1]
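Those rates translate directly into a per-run cost. The sketch below is a simple estimator using the quoted partner prices; the token counts in the example are hypothetical, not figures from the source.

```python
# Partner pricing quoted above, in USD per million tokens.
INPUT_USD_PER_M = 25.0
OUTPUT_USD_PER_M = 125.0

def run_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one run at the quoted Mythos Preview partner rates."""
    return (input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M) / 1_000_000

# Hypothetical run: 2M input tokens and 400k output tokens.
print(run_cost(2_000_000, 400_000))  # 100.0
```

For scale, the OpenBSD figure of under $20,000 across ~1,000 runs averages out to under $20 per run. Output tokens cost five times as much as input tokens, so long agentic sessions that generate a lot of text dominate the bill.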

Key takeaways

- Mythos Preview is a generational jump in AI vulnerability detection, finding thousands of zero-days including bugs that survived 27 years of human review

- The coalition is unprecedented. AWS, Apple, Google, Microsoft, NVIDIA, and others rarely collaborate this directly on a single initiative

- It's not public for good reason. The exploit capability jump from Opus 4.6 to Mythos is too large to release without safeguards

- General AI improvement produced security capability. Nobody trained Mythos to exploit kernels. Better reasoning and autonomy did that on their own

- The economics of vulnerability discovery just changed. A kernel exploit chain for under $1,000 in half a day rewrites every assumption about attacker cost

I break down AI and cybersecurity stories like this on LinkedIn, X, and Instagram. If this was useful, you'd probably like those too.

Footnotes
